- Hadoop is a widely used open-source Big Data framework. It is designed for parallel processing of structured and unstructured data on low-cost commodity hardware. Hadoop does its work in batches rather than in real time, and it replicates data across the network for fault tolerance.

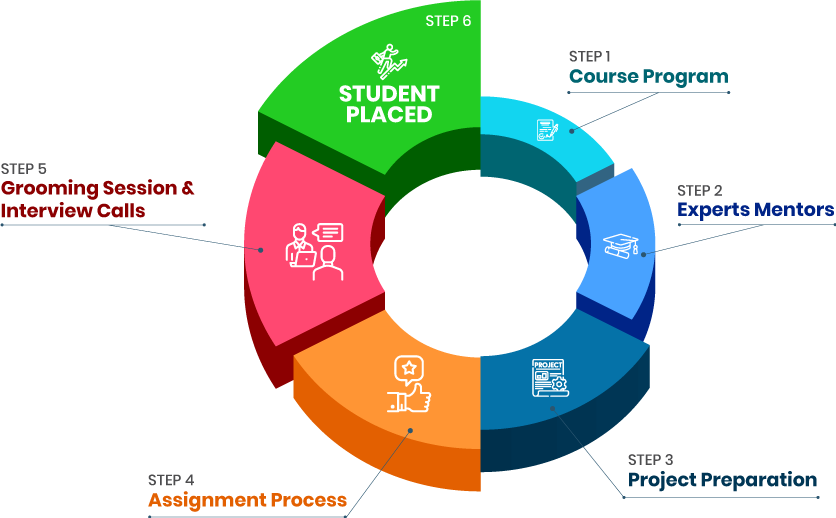
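To make the batch, parallel processing model described above more concrete, here is a minimal sketch of the classic word count expressed as map, shuffle, and reduce steps. It is plain Python that only simulates the flow on one machine; the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) are illustrative assumptions and are not part of the Hadoop API.

```python
from collections import defaultdict


def map_phase(line):
    """Emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(pairs):
    """Group emitted values by key, as happens between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Sum the counts collected for one word."""
    return key, sum(values)


def word_count(lines):
    # On a real cluster, different nodes would map different blocks of input;
    # here that parallelism is simulated with a plain loop (an assumption).
    mapped = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    sample = ["big data hadoop", "hadoop stores big data"]
    print(word_count(sample))
```

In an actual Hadoop job the mapper and reducer would typically be written against the Java MapReduce API, and the framework would handle the shuffle step itself.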

- By delving into the topic, you will learn how Big Data Hadoop helps store huge volumes of data efficiently. So, if you are looking for professional training, Croma Campus is a great place to gain detailed insight through Big Data Hadoop Training in Delhi. Here, you will not only gain theoretical knowledge but also receive placement assistance.

- Big Data Hadoop is one of the most in-demand courses in the IT domain. For beginners, Big Data Hadoop Training in Delhi might seem a bit difficult, but with adequate guidance you will understand every part of the course. By getting in touch with a Big Data Hadoop Training Institute in Delhi, you will cover each topic and sub-topic in detail.

At the initial level of the course, our trainers will help you learn the fundamentals.

Further, you will also receive sessions on Hadoop architecture, distributed storage (HDFS) and YARN, what HDFS is, the need for HDFS, etc.

Our trainers will also help you understand Data Replication Topology, the HDFS command line, data ingestion into Big Data systems and ETL, distributed processing, Hive SQL over Hadoop MapReduce, the basics of functional programming and Scala, etc.

Here, you will also get a chance to pick up new skills related to this course.

In a nutshell, you will learn every part of this course in detail.
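As a small illustration of the HDFS block split and data replication topics mentioned above, the following sketch estimates how many blocks a file occupies and how much raw storage its replicas need. It assumes a 128 MB block size and a replication factor of 3, which are common defaults in recent Hadoop releases but are configurable per cluster; the function name `hdfs_storage_plan` is hypothetical and not part of any Hadoop tool.

```python
import math


def hdfs_storage_plan(file_size_mb, block_size_mb=128, replication=3):
    """Estimate block count and raw storage for one file.

    The default block size and replication factor are assumptions;
    real clusters may be configured differently.
    """
    if file_size_mb < 0 or block_size_mb <= 0 or replication < 1:
        raise ValueError("sizes must be non-negative and replication at least 1")
    # The last block may be only partially filled, so round up.
    blocks = math.ceil(file_size_mb / block_size_mb)
    return {
        "blocks": blocks,
        "block_replicas": blocks * replication,
        # A partial last block only uses the space it needs, so raw
        # storage is the file size multiplied by the replication factor.
        "raw_storage_mb": file_size_mb * replication,
    }


if __name__ == "__main__":
    print(hdfs_storage_plan(1000))
```

Under these assumptions, a 1000 MB file is split into 8 blocks, stored as 24 block replicas that take about 3000 MB of raw disk space across the cluster.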

- As far as salary is concerned, this is one of the better-paid fields. With a legitimate Big Data Hadoop Training certification in hand, you can expect a decent salary package.

At the initial level, you will earn around Rs. 3 Lakh to Rs. 3.5 Lakh, which is quite good for freshers.

Likewise, an experienced Big Data Hadoop Developer earns Rs. 11.5 Lakh annually.

Moreover, as you gain more work experience and keep up with the latest skills and trends, your salary will grow.

By taking on freelance projects, you can also earn some good additional income.

- To be precise, Hadoop is a technology that offers numerous opportunities to build and grow your career. In fact, Hadoop is one of the most valuable skills to learn today and can help you obtain a rewarding job. If your interest lies in this direction, choosing this field will benefit your career in numerous ways.

By obtaining legitimate training from a reputed educational institution, you will become a knowledgeable Big Data Hadoop Developer.

Holding a proper Big Data Hadoop Developer certification, you can be offered an excellent salary package right from the beginning of your career.

Getting to know every aspect of Big Data Hadoop development will also push you to come up with innovative applications.

Knowing this skill will eventually enhance your resume.

You will always have numerous job offers in hand.

- A skilled Big Data Hadoop Developer is responsible for many tasks, and to carry them out smoothly you need to learn the relevant skills properly. By getting in touch with a decent Big Data Hadoop Training Institute in Delhi, you will get the chance to understand each role in detail.

- Some of the major roles are mentioned below.

Firstly, you will have to build, implement, and maintain Big Data solutions.

You will also have to carry out database modeling and development, data mining, and warehousing.

You will also have to design and deploy data platforms across numerous domains, ensuring operability.

Our trainers will also help you gain experience with the Hadoop ecosystem (HDFS, MapReduce, Oozie, Hive, Impala, Spark, Kerberos, Kafka, etc.).

At the same time, you will also have to transform data for meaningful analyses and enhance data efficiency.

Often, you will have to execute test scripts and analyze the results.

- If you delve into the topic, you will find various reasons to get started with Big Data Hadoop Training in Delhi. One of the most significant is Hadoop's ability to handle huge volumes of data.

By getting started with this course, you will strengthen your foundational knowledge.

You will learn the various features and offerings of this technology by getting in touch with a well-established Big Data Hadoop Training Institute in Delhi.

You will also learn about building new kinds of applications and implementing some of the latest features.

You will also pick up some lesser-known facts from the Big Data Hadoop Training in Delhi.

- At the moment, Big Data Hadoop Developers are extensively in demand, yet the supply is low. If you are planning to build your career in this field, getting started with Big Data Hadoop Training in Delhi will be a suitable move. Getting associated with a decent Big Data Hadoop Training Institute in Delhi will also help you secure a higher position.

By joining Croma Campus, you will get the opportunity to be placed in companies of your choice after enrolling in the Big Data Hadoop course.

Our trainers will also help you in building an impressive resume.

They will also suggest some effective tips for passing interviews.

- Related Courses to Big Data Hadoop Training in Delhi

Why you should get started with the Big Data Hadoop Course?

Course Duration

60 Hrs.

Flexible Batches For You

- 19-Apr-2025*: Weekend (SAT - SUN), Mor | Aft | Eve slot

- 21-Apr-2025*: Weekday (MON - FRI), Mor | Aft | Eve slot

- 16-Apr-2025*: Weekday (MON - FRI), Mor | Aft | Eve slot

Program fees are indicative only*

Timings don't suit you? We can set up a batch at a time convenient for you.

Program Core Credentials

- Trainer Profiles: Industry Experts

- Trained Students: 10000+

- Success Ratio: 100%

- Corporate Training: For India & Abroad

- Job Assistance: 100%

CURRICULUM & PROJECTS

Big Data Hadoop Training

- Introduction to Big Data & Hadoop

- HDFS

- YARN

- Managing and Scheduling Jobs

- Apache Sqoop

- Apache Flume

- Getting Data into HDFS

- Apache Kafka

- Hadoop Clients

- Cluster Maintenance

- Cloudera Manager

- Cluster Monitoring and Troubleshooting

- Planning Your Hadoop Cluster

- Advanced Cluster Configuration

- MapReduce Framework

- Apache PIG

- Apache HIVE

- NoSQL Databases HBase

- Functional Programming using Scala

- Apache Spark

- Hadoop Datawarehouse

- Writing MapReduce Program

- Introduction to Combiner

- Problem-solving with MapReduce

- Overview of Course

- What is Big Data

- Big Data Analytics

- Challenges of Traditional System

- Distributed Systems

- Components of Hadoop Ecosystem

- Commercial Hadoop Distributions

- Why Hadoop

- Fundamental Concepts in Hadoop

- Why Hadoop Security Is Important

- Hadoop’s Security System Concepts

- What Kerberos Is and How it Works

- Securing a Hadoop Cluster with Kerberos

- Deployment Types

- Installing Hadoop

- Specifying the Hadoop Configuration

- Performing Initial HDFS Configuration

- Performing Initial YARN and MapReduce Configuration

- Hadoop Logging

- What is HDFS

- Need for HDFS

- Regular File System vs HDFS

- Characteristics of HDFS

- HDFS Architecture and Components

- High Availability Cluster Implementations

- HDFS Component File System Namespace

- Data Block Split

- Data Replication Topology

- HDFS Command Line

- Yarn Introduction

- Yarn Use Case

- Yarn and its Architecture

- Resource Manager

- How Resource Manager Operates

- Application Master

- How Yarn Runs an Application

- Tools for Yarn Developers

- Managing Running Jobs

- Scheduling Hadoop Jobs

- Configuring the Fair Scheduler

- Impala Query Scheduling

- Apache Sqoop

- Sqoop and Its Uses

- Sqoop Processing

- Sqoop Import Process

- Sqoop Connectors

- Importing and Exporting Data from MySQL to HDFS

- Apache Flume

- Flume Model

- Scalability in Flume

- Components in Flume’s Architecture

- Configuring Flume Components

- Ingest Twitter Data

- Data Ingestion Overview

- Ingesting Data from External Sources with Flume

- Ingesting Data from Relational Databases with Sqoop

- REST Interfaces

- Best Practices for Importing Data

- Apache Kafka

- Aggregating User Activity Using Kafka

- Kafka Data Model

- Partitions

- Apache Kafka Architecture

- Setup Kafka Cluster

- Producer Side API Example

- Consumer Side API

- Consumer Side API Example

- Kafka Connect

- What is a Hadoop Client

- Installing and Configuring Hadoop Clients

- Installing and Configuring Hue

- Hue Authentication and Authorization

- Checking HDFS Status

- Copying Data between Clusters

- Adding and Removing Cluster Nodes

- Rebalancing the Cluster

- Cluster Upgrading

- The Motivation for Cloudera Manager

- Cloudera Manager Features

- Express and Enterprise Versions

- Cloudera Manager Topology

- Installing Cloudera Manager

- Installing Hadoop Using Cloudera Manager

- Performing Basic Administration Tasks using Cloudera Manager

- General System Monitoring

- Monitoring Hadoop Clusters

- Common Troubleshooting Hadoop Clusters

- Common Misconfigurations

- General Planning Considerations

- Choosing the Right Hardware

- Network Considerations

- Configuring Nodes

- Planning for Cluster Management

- Advanced Configuration Parameters

- Configuring Hadoop Ports

- Explicitly Including and Excluding Hosts

- Configuring HDFS for Rack Awareness

- Configuring HDFS High Availability

- What is MapReduce

- Basic MapReduce Concepts

- Distributed Processing in MapReduce

- Word Count Example

- Map Execution Phases

- Map Execution Distributed Two Node Environment

- MapReduce Jobs

- Hadoop MapReduce Job Work Interaction

- Setting Up the Environment for MapReduce Development

- Set of Classes

- Creating a New Project

- Advanced MapReduce

- Data Types in Hadoop

- Output formats in MapReduce

- Using Distributed Cache

- Joins in MapReduce

- Replicated Join

- Introduction to Pig

- Components of Pig

- Pig Data Model

- Pig Interactive Modes

- Pig Operations

- Various Relations Performed by Developers

- Introduction to Apache Hive

- Hive SQL over Hadoop MapReduce

- Hive Architecture

- Interfaces to Run Hive Queries

- Running Beeline from Command Line

- Hive Meta Store

- Hive DDL and DML

- Creating New Table

- Data Types

- Validation of Data

- File Format Types

- Data Serialization

- Hive Table and Avro Schema

- Hive Optimization Partitioning Bucketing and Sampling

- Non-Partitioned Table

- Data Insertion

- Dynamic Partitioning in Hive

- Bucketing

- What Do Buckets Do

- Hive Analytics UDF and UDAF

- Other Functions of Hive

- NoSQL Databases HBase

- NoSQL Introduction

- HBase Overview

- HBase Architecture

- Data Model

- Connecting to HBase

- HBase Shell

- Basics of Functional Programming and Scala

- Introduction to Scala

- Scala Installation

- Functional Programming

- Programming with Scala

- Basic Literals and Arithmetic Programming

- Logical Operators

- Type Inference Classes Objects and Functions in Scala

- Type Inference Functions Anonymous Function and Class

- Collections

- Types of Collections

- Operations on List

- Scala REPL

- Features of Scala REPL

- Apache Spark Next-Generation Big Data Framework

- History of Spark

- Limitations of MapReduce in Hadoop

- Introduction to Apache Spark

- Components of Spark

- Application of In-memory Processing

- Hadoop Ecosystem vs Spark

- Advantages of Spark

- Spark Architecture

- Spark Cluster in Real World

- Hadoop and the Data Warehouse

- Hadoop Differentiators

- Data Warehouse Differentiators

- When and Where to Use Which

- Introduction

- RDBMS Strengths

- RDBMS Weaknesses

- Typical RDBMS Scenario

- OLAP Database Limitations

- Using Hadoop to Augment Existing Databases

- Benefits of Hadoop

- Hadoop Trade-offs

- Advanced Programming in Hadoop

- A Sample MapReduce Program: Introduction

- MapReduce: List Processing

- MapReduce Data Flow

- The MapReduce Flow: Introduction

- Basic MapReduce API Concepts

- Putting Mapper & Reducer together in MapReduce

- Our MapReduce Program: Word Count

- Getting Data to the Mapper

- Keys and Values are Objects

- What is Writable Comparable

- Writing MapReduce application in Java

- The Driver

- The Driver: Complete Code

- The Driver: Import Statements

- The Driver: Main Code

- The Driver Class: Main Method

- Sanity Checking the Job’s Invocation

- Configuring the Job with JobConf

- Creating a New JobConf Object

- Naming the Job

- Specifying Input and Output Directories

- Specifying the Input Format

- Determining Which Files to Read

- Specifying Final Output with Output Format

- Specify the Classes for Mapper and Reducer

- Specify the Intermediate Data Types

- Specify the Final Output Data Types

- Running the Job

- Reprise: Driver Code

- The Mapper

- The Mapper: Complete Code

- The Mapper: import Statements

- The Mapper: Main Code

- The Map Method

- The map Method: Processing the Line

- Reprise: The Map Method

- The Reducer

- The Reducer: Complete Code

- The Reducer: Import Statements

- The Reducer: Main Code

- The reduce Method

- Processing the Values

- Writing the Final Output

- Reprise: The Reduce Method

- Speeding up Hadoop development by using Eclipse

- Integrated Development Environments

- Using Eclipse

- Writing a MapReduce program

- The Combiner

- MapReduce Example: Word Count

- Word Count with Combiner

- Specifying a Combiner

- Demonstration: Writing and Implementing a Combiner

- Introduction

- Sorting

- Sorting as a Speed Test of Hadoop

- Shuffle and Sort in MapReduce

- Searching

- Secondary Sort: Motivation

- Implementing the Secondary Sort

- Secondary Sort: Example

- Indexing

- Inverted Index Algorithm

- Inverted Index: Data Flow

- Aside: Word Count

- Term Frequency Inverse Document Frequency (TF-IDF)

- TF-IDF: Motivation

- TF-IDF: Data Mining Example

- TF-IDF Formally Defined

- Computing TF-IDF

- Word Co-Occurrence: Motivation

- Word Co-Occurrence: Algorithm

Mock Interviews

Phone (For Voice Call): +91-971 152 6942

WhatsApp (For Call & Chat): +919711526942

SELF ASSESSMENT

Learn, Grow & Test your skill with Online Assessment Exam to achieve your Certification Goals

FAQs

This course takes around 45-50 days to understand fully.

Yes, they are hugely in demand. So, choosing this field will be beneficial for your career.

Yes, there are two modes of training: online and offline.

Yes, along with study material, you will also get access to our LMS Portal which will help you review your sessions.

- Build an Impressive Resume

- Get Tips from Trainer to Clear Interviews

- Attend Mock-Up Interviews with Experts

- Get Interviews & Get Hired

If yes, register today and get impeccable learning solutions!

Training Features

Instructor-led Sessions

The most traditional way to learn, with increased visibility, monitoring, and control over learners, and the ease of learning at any time from internet-connected devices.

Real-life Case Studies

Case studies based on top industry frameworks help you relate your learning to real-world industry solutions.

Assignment

Assignments add scope for improvement and foster analytical abilities and skills through well-designed academic work.

Lifetime Access

Get unlimited lifetime access to the course, giving you the freedom to learn at your own pace.

24 x 7 Expert Support

Learn without limits, with in-depth guidance from support that is available around the clock to resolve all your course-related queries.

Certification

Each certification associated with the program is affiliated with top universities, giving you an edge in the course.

Showcase your Course Completion Certificate to Recruiters

- Training Certificate is Governed by 12 Global Associations.

- Training Certificate is Powered by “Wipro DICE ID”

- Training Certificate is Powered by "Verifiable Skill Credentials"

Master in Cloud Computing Training